Designing machine integration that operators can trust

Industrial software sits between the machine and the operator. When it is wrong, production stops and the cost is immediate. That is why delivery has to be grounded in how the line actually runs, not just in how the requirements were written.

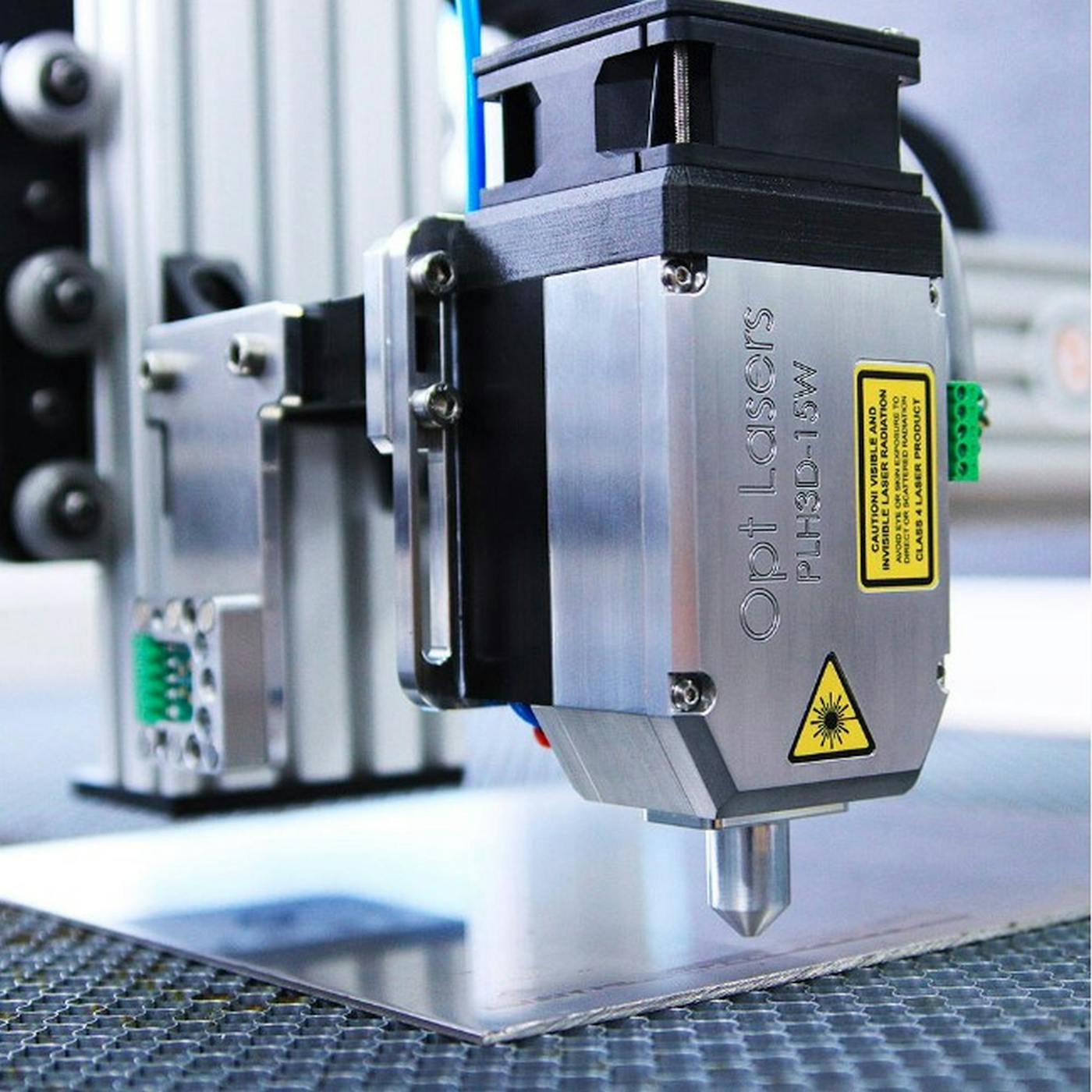

We have delivered systems that connect precision equipment, production tooling, and operator workstations. The common thread is the same each time: a dependable driver layer, clear visualization of what the machine is doing, and tooling that lets operations solve issues without waiting on engineering.

Start with the production reality

Before architecture, we map how jobs are created, queued, approved, and released. Changeovers, calibration cycles, manual overrides, and safety interlocks are the moments where software gets tested. A good design anticipates those moments and turns them into supported states instead of exceptions that only experts can handle.

That means aligning with the actual roles on the shop floor. Operators need fast feedback and clear next steps. Supervisors need visibility into throughput and quality. Maintenance teams need diagnostic context that points to root causes, not just a generic alarm.

Driver layers that survive the shop floor

Machine integration is not a one-time connector. It is a contract that has to remain stable across firmware versions, different machine models, and varying plant conditions. We build driver layers that isolate device protocols behind predictable state machines, with well-defined recovery paths for timeouts, partial jobs, and safety stops.
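To make the idea of a predictable state machine concrete, here is a minimal sketch in Python. The state names, event names, and transition table are illustrative assumptions rather than a description of any particular driver. The point is that timeouts and safety stops become explicit, supported transitions, and anything not listed in the table is rejected.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    RUNNING = auto()
    RECOVERING = auto()
    SAFETY_STOPPED = auto()
    FAULTED = auto()


class Event(Enum):
    START_JOB = auto()
    JOB_DONE = auto()
    TIMEOUT = auto()
    RECOVERED = auto()
    SAFETY_STOP = auto()
    OPERATOR_RESET = auto()


# Hypothetical transition table: only the (state, event) pairs listed here are allowed.
TRANSITIONS = {
    (State.IDLE, Event.START_JOB): State.RUNNING,
    (State.RUNNING, Event.JOB_DONE): State.IDLE,
    (State.RUNNING, Event.TIMEOUT): State.RECOVERING,
    (State.RECOVERING, Event.RECOVERED): State.RUNNING,
    (State.RECOVERING, Event.TIMEOUT): State.FAULTED,
    (State.IDLE, Event.SAFETY_STOP): State.SAFETY_STOPPED,
    (State.RUNNING, Event.SAFETY_STOP): State.SAFETY_STOPPED,
    (State.RECOVERING, Event.SAFETY_STOP): State.SAFETY_STOPPED,
    (State.SAFETY_STOPPED, Event.OPERATOR_RESET): State.IDLE,
    (State.FAULTED, Event.OPERATOR_RESET): State.IDLE,
}


class DriverStateMachine:
    """Tracks driver state and rejects events that are not valid in the current state."""

    def __init__(self):
        self.state = State.IDLE
        self.history = [State.IDLE]

    def handle(self, event):
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"{event.name} is not allowed in state {self.state.name}")
        self.state = TRANSITIONS[key]
        self.history.append(self.state)
        return self.state
```

In this sketch a second timeout during recovery moves the driver to a fault state that only an operator reset can clear. That is one possible policy, and the right one depends on the machine.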

In multi-machine environments, the driver layer must also normalize capabilities so the rest of the system does not branch for every device. That means capability discovery, configuration profiles, and a simulation mode for testing without hardware. When a machine goes offline, operations should have a safe fallback and a clear reason why.
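One way to picture capability normalization and a simulation mode is a shared driver interface with a simulated implementation behind it. The sketch below is an assumption-heavy illustration: `Capabilities`, `Status`, `MachineDriver`, `SimulatedDriver`, and `can_run` are hypothetical names, and the capability fields are examples only.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Capabilities:
    axes: int
    supports_probing: bool
    max_feed_mm_per_min: float


@dataclass(frozen=True)
class Status:
    online: bool
    reason: Optional[str] = None


class MachineDriver(Protocol):
    """Interface the rest of the system sees, regardless of device protocol."""

    def capabilities(self) -> Capabilities: ...

    def status(self) -> Status: ...


class SimulatedDriver:
    """Stands in for real hardware so workflows can be tested without a machine."""

    def __init__(self, caps, online=True, offline_reason=None):
        self._caps = caps
        self._online = online
        self._offline_reason = offline_reason

    def capabilities(self):
        return self._caps

    def status(self):
        if self._online:
            return Status(online=True)
        return Status(online=False, reason=self._offline_reason or "unknown")


def can_run(driver, required_axes, needs_probing):
    """Return (ok, reason) so the caller always has an explanation to show."""
    status = driver.status()
    if not status.online:
        return False, f"machine offline: {status.reason}"
    caps = driver.capabilities()
    if caps.axes < required_axes:
        return False, f"job needs {required_axes} axes, machine has {caps.axes}"
    if needs_probing and not caps.supports_probing:
        return False, "job needs probing, machine does not support it"
    return True, "ok"
```

Because `can_run` only talks to the interface, the application does not branch per device, and an offline machine always comes with a stated reason.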

Visualization, CAM and CAD workflows, and toolpath reliability

Industrial software is often anchored by 3D visualization. Operators and engineers rely on a clear view of toolpaths, geometry, and machine envelopes to avoid costly errors. We have delivered 3D rendering and visualization components that keep performance stable while handling large models, high-detail parts, and multiple viewpoints.

When CAM and CAD workflows are part of the system, data integrity is critical. Units, coordinate systems, and tool orientation must remain consistent from import to simulation to execution. We design validation steps that catch geometry issues early, and we build preview and simulation tooling that mirrors machine behavior so operators can trust the output before committing a job.
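As a small illustration of an early validation step, the sketch below normalizes imported toolpath points to millimeters and checks them against a machine envelope. The `Envelope` type, the function names, and the supported unit list are assumptions made for this example. A real pipeline would also cover coordinate-system and tool-orientation checks.

```python
from dataclasses import dataclass

# Conversion factors to millimeters; only these two units are assumed here.
MM_PER_UNIT = {"mm": 1.0, "in": 25.4}


@dataclass(frozen=True)
class Envelope:
    """Axis-aligned travel limits of the machine, in millimeters."""
    min_xyz: tuple
    max_xyz: tuple


def to_mm(points, units):
    """Convert (x, y, z) points from the given units to millimeters."""
    if units not in MM_PER_UNIT:
        raise ValueError(f"unsupported units: {units!r}")
    scale = MM_PER_UNIT[units]
    return [tuple(coord * scale for coord in point) for point in points]


def validate_toolpath(points, units, envelope):
    """Return a list of human-readable issues; an empty list means no issue found."""
    issues = []
    for index, point in enumerate(to_mm(points, units)):
        for axis, value, low, high in zip("XYZ", point, envelope.min_xyz, envelope.max_xyz):
            if not low <= value <= high:
                issues.append(
                    f"point {index}: {axis}={value:.3f} mm outside [{low}, {high}]"
                )
    return issues
```

The same point can pass in millimeters and fail in inches, which is exactly the kind of mismatch worth catching before simulation.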

Integration with planning and quality systems

Production data only becomes useful when it flows into MES, ERP, and quality systems in a consistent format. We build interfaces that capture job context, serials, measurement data, and non-conformance reasons so downstream teams can trust the numbers. That includes buffering and retry patterns for shop-floor networks, plus clear data ownership so there is no ambiguity between machine readings and manual adjustments.
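The buffering and retry pattern mentioned above can be sketched as a simple store-and-forward queue. This is a minimal in-memory illustration: the class name, the `send` callable, and the record fields (including the `source` field that separates machine readings from manual adjustments) are hypothetical, and a production version would likely persist the queue to disk.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Holds records locally and delivers them in order once the network allows."""

    def __init__(self, send, max_records=10000):
        self._send = send            # callable that raises ConnectionError on failure
        self._pending = deque()
        self._max_records = max_records

    @property
    def pending(self):
        return len(self._pending)

    def enqueue(self, record):
        if len(self._pending) >= self._max_records:
            raise OverflowError("buffer full; refusing to drop records silently")
        self._pending.append(record)

    def flush(self):
        """Try to deliver pending records in order; stop at the first failure."""
        delivered = 0
        while self._pending:
            record = self._pending[0]
            try:
                self._send(record)
            except ConnectionError:
                break  # keep the record and retry on the next flush
            self._pending.popleft()
            delivered += 1
        return delivered
```

A record is removed only after `send` returns, so a dropped connection delays data rather than losing it. The trade-off is that a retry after an ambiguous failure can deliver a record twice, so the receiving side should tolerate duplicates.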

Release discipline and long-term support

Industrial platforms live far longer than a typical software product. Upgrades often happen during narrow maintenance windows, and the cost of failure is high. We plan for versioned configurations, backward-compatible driver updates, and phased rollouts that keep production running while new features land.

That discipline extends to documentation and training. When a new operator starts or a new machine model is introduced, the software should remain intuitive and the operational playbooks should already exist. Reliable delivery is as much about the lifecycle as it is about the initial release.
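Versioned configurations are easier to reason about with a small example. The sketch below upgrades a stored configuration one version at a time. The version numbers, field names, and the specific changes in each step are invented for illustration.

```python
CURRENT_VERSION = 3


def _v1_to_v2(cfg):
    # Hypothetical change: version 2 renamed "feed" to "feed_mm_per_min".
    out = dict(cfg)
    out["feed_mm_per_min"] = out.pop("feed")
    out["version"] = 2
    return out


def _v2_to_v3(cfg):
    # Hypothetical change: version 3 added "probe_enabled", defaulting to off.
    out = dict(cfg)
    out.setdefault("probe_enabled", False)
    out["version"] = 3
    return out


# Each entry upgrades a configuration from the keyed version to the next one.
MIGRATIONS = {1: _v1_to_v2, 2: _v2_to_v3}


def upgrade_config(cfg):
    """Return a copy of cfg upgraded step by step to CURRENT_VERSION."""
    cfg = dict(cfg)
    while cfg["version"] < CURRENT_VERSION:
        cfg = MIGRATIONS[cfg["version"]](cfg)
    if cfg["version"] != CURRENT_VERSION:
        raise ValueError(f"config version {cfg['version']} is newer than supported")
    return cfg
```

Stepwise migrations keep each change small and testable, and a configuration written by a newer release is rejected explicitly instead of being misread.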

Operational tooling and diagnostics

The most valuable industrial platforms give operations the ability to solve problems fast. That means rich status telemetry, event logs that read like a timeline, and diagnostic screens that are built for the people who use them every day. We favor structured event models that make it easy to filter by job, machine, or error type.
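A structured event model of this kind might look like the following sketch. The `MachineEvent` fields and the `filter_events` helper are illustrative assumptions. The idea is that every event carries the same keys, so filtering by job, machine, or error type is a lookup rather than a text search.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class MachineEvent:
    timestamp: datetime
    machine_id: str
    message: str
    job_id: Optional[str] = None
    error_type: Optional[str] = None  # None for informational events


def filter_events(events, machine_id=None, job_id=None, error_type=None):
    """Return matching events ordered by time; a filter left as None matches everything."""
    selected = [
        event
        for event in events
        if (machine_id is None or event.machine_id == machine_id)
        and (job_id is None or event.job_id == job_id)
        and (error_type is None or event.error_type == error_type)
    ]
    return sorted(selected, key=lambda event: event.timestamp)
```

Sorting the result by timestamp is what lets a diagnostic screen present the filtered events as a timeline.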

We also plan for supportability. Remote access is not always possible, so local diagnostics, exportable reports, and reproducible test cases become essential. When a line is down, the difference between vague logs and actionable traces can be hours of lost production.

Performance testing in industrial systems goes beyond throughput. It covers cold starts, long-running stability, and behavior under degraded network conditions. We simulate those scenarios early and validate that the system stays responsive for operators, because slow interfaces can be just as disruptive as downtime.

What strong industrial delivery looks like

- Driver abstractions that protect the application from protocol changes.

- Toolpath preview and simulation that reflect actual machine constraints.

- 3D visualization that stays responsive with large models.

- Operator experiences that simplify recovery and reduce escalation.

- Diagnostics and telemetry that support continuous improvement.

Industrial software has to be stable, explainable, and resilient. When we deliver for this sector, we focus on the whole chain from visualization to drivers to operations tooling, because trust is built across the workflow, not at a single interface.